(Figures were taken from the book "Materialien für den Sekundarbereich II Biologie - Informationsverarbeitung" by Wolfgang Miram / Dieter Krumwiede, ©1985 Schroedel Schulbuchverlag GmbH, ISBN 3-507-10517-9)

Ear damage by MP3, DVD and digital television?

(v2.0)

Unlike the compression and decompression of computer programs (e.g. ZIP), lossy data compression (data reduction) does not reconstruct the original signal 1:1. Instead, to reduce the amount of data, only control signals for a synthesizer program (called a CODEC) are recorded. These are optimized so that during playback the CODEC can reconstruct from them an approximation of the original picture or sound signal that appears as similar as possible to conscious human perception but is not identical to the original signal. The danger of exploiting these flaws in human perception is that lossy audio data compression in particular systematically destroys sound portions which, although the brain would not pass them on to conscious awareness, are likely necessary for the perpetual self-calibration of human hearing.
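The difference between the two kinds of compression can be illustrated with a small sketch. It is only a toy under stated assumptions: the sine-wave test signal, the function names and the crude 16-level quantizer are made up for illustration and have nothing to do with how a real CODEC such as MP3 works internally. It merely shows that a ZIP-style round trip restores every byte, while a lossy round trip returns only an approximation.

```python
import math
import zlib

# Hypothetical test signal: 1000 unsigned 8-bit samples of a sine wave.
samples = bytes(int(127.5 + 127 * math.sin(2 * math.pi * 5 * n / 1000))
                for n in range(1000))


def lossless_roundtrip(data: bytes) -> bytes:
    """ZIP-style (DEFLATE) compression: decompression restores every byte."""
    return zlib.decompress(zlib.compress(data))


def lossy_roundtrip(data: bytes, step: int = 16) -> bytes:
    """Toy data reduction (assumption, not a real codec): keep only which
    bucket of `step` levels a sample falls into, then play back the middle
    of that bucket."""
    return bytes(min(255, (b // step) * step + step // 2) for b in data)


restored = lossless_roundtrip(samples)
approximated = lossy_roundtrip(samples)
max_error = max(abs(a - b) for a, b in zip(samples, approximated))

print("lossless identical:", restored == samples)
print("lossy identical:   ", approximated == samples)
print("largest lossy sample error:", max_error)
```

The information thrown away by `lossy_roundtrip` cannot be recovered from its output, which is the property the rest of this text is concerned with.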

The basic principle of modern audio data reduction is to omit during storage exactly those sound portions that an average human being would not consciously perceive. The white science likes to play such methods down by calling them "psychoacoustic", although they really have nothing to do with the psychology of sounds (e.g. the perception of noises as pleasant or unpleasant). Their impact lies on a far lower neurological level, since they operate on a model of the cochlea in the human ear, and thus they should correctly be called "neuroacoustic" data reduction.

The cochlea

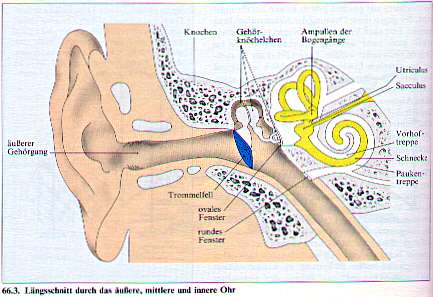

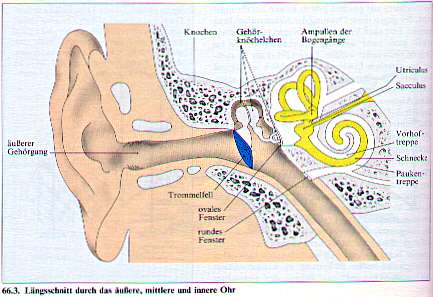

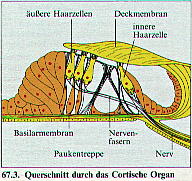

Cross-section through the cochlea (schematic). The bulge in the basilar membrane (organ of Corti) contains the sensors. With their hair-shaped ends these touch the tectorial membrane that covers them.

To distinguish sounds, the basilar membrane is stretched inside along the entire tube, and along its full length very sensitive sensor cells are attached that react to vibrations of the membrane.

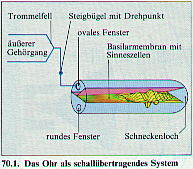

Schematic illustration of sound waves on the basilar membrane inside the cochlear tube (which in reality is conical and spiral-shaped).

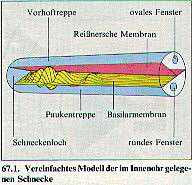

Simplified model of the cochlea.

Depending on the frequency components currently present, frequency-dependent damping makes the travelling waves in the conical tube form amplitude maxima at different locations on the membrane. These excite different sensor cells, which enables the brain to distinguish different pitches.
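The relation between frequency and the location of the amplitude maximum can be sketched numerically. One common empirical description is Greenwood's function; the constants below are the values usually quoted for the human cochlea and are an assumption brought in for illustration, not something taken from the figures above. The function names are likewise made up.

```python
import math

# Greenwood's function: F = A * (10**(a * x) - k)
# Constants commonly quoted for humans (assumption, not from this article).
A_HZ = 165.4
SLOPE = 2.1
K = 0.88


def place_to_frequency(x: float) -> float:
    """Frequency in Hz whose travelling wave peaks at relative position x,
    where x = 0 is the tip (apex) and x = 1 is the entrance (base)."""
    return A_HZ * (10 ** (SLOPE * x) - K)


def frequency_to_place(freq_hz: float) -> float:
    """Inverse mapping: relative position of the maximum for a frequency."""
    return math.log10(freq_hz / A_HZ + K) / SLOPE


for f in (100, 1000, 10000):
    place = frequency_to_place(f)
    print(f"{f:>6} Hz peaks at about {place:.2f} of the membrane length")
```

In this model low tones peak near the tip of the tube and high tones near its entrance, so neighbouring frequencies always excite neighbouring membrane zones.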

Because the maxima of the membrane flexion are not sharply focused on one spot but the travelling waves always also excite nearby membrane zones, and because after a loud noise after-vibrations (resonances) persist on the membrane for a short time, the hearing processor fields* of the brain contain very finely coordinated compensation circuits. These filter out all weak signals from these places, because the side resonances would otherwise appear in conscious perception as annoyingly roaring interference noises. Due to these compensation circuits, humans generally cannot consciously perceive quieter tones simultaneously with or shortly after loud tones at a nearby frequency, because they are filtered out together with the unwanted interference.

The lossy (neuroacoustic) audio data reduction basically does nothing other than simulate the interference of the cochlea and the compensation circuits in the brain. For this, during recording it separates the sounds by filters into many frequency bands and evaluates for each band how strongly its perceptibility would be disturbed ("masked") by simultaneous or lingering loud sounds in nearby frequency bands (i.e. basilar membrane zones). The frequency bands rated as best perceivable are then encoded with high sample resolution, less perceivable bands with correspondingly lower sample resolution, and all sounds below a fixed threshold of perceptibility are not encoded at all but omitted to save memory and transmission bandwidth. During playback the stored samples are simply mixed together again in their corresponding frequency bands, so all sound portions that were omitted during recording naturally stay lost. Since the CODEC fools the hearing processor fields of the human brain with its "hearing-correct" selection, audio recordings can be reduced in this way to less than 1/20 of their initial data amount without much noticeable loss of quality. But continuous consumption of data-reduced audio could possibly have grave consequences - this must be viewed particularly critically in light of the planned replacement of the analogue radio and television broadcast standards by digital successors (DAB, DVB).
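The encoding decision described above can be sketched as a toy bit allocation. This is a minimal illustration under loud assumptions, not the procedure of MP3 or any other real CODEC: the band levels, the 10 dB masking offset, the 15 dB per band spreading slope, the 0 dB threshold in quiet and the symmetric spreading are all hypothetical values chosen for readability. The only general rule used is that each bit of sample resolution buys roughly 6 dB of signal-to-noise ratio.

```python
import math


def masking_threshold(levels_db, index, offset_db=10.0,
                      slope_db_per_band=15.0, quiet_db=0.0):
    """Level below which band `index` counts as inaudible in this toy model.
    Every other band masks its neighbours, weaker with growing distance."""
    threshold = quiet_db  # hypothetical fixed threshold of perceptibility
    for j, masker_db in enumerate(levels_db):
        if j == index:
            continue
        spread = masker_db - offset_db - slope_db_per_band * abs(j - index)
        threshold = max(threshold, spread)
    return threshold


def allocate_bits(levels_db, max_bits=16):
    """Give each band about 1 bit per 6.02 dB it rises above its masking
    threshold; bands at or below the threshold are omitted (0 bits)."""
    bits = []
    for i, level_db in enumerate(levels_db):
        audible_db = level_db - masking_threshold(levels_db, i)
        if audible_db <= 0:
            bits.append(0)
        else:
            bits.append(min(max_bits, math.ceil(audible_db / 6.02)))
    return bits


# Hypothetical sound levels (dB) in six neighbouring frequency bands.
levels = [60.0, 80.0, 50.0, 20.0, 45.0, 3.0]
allocation = allocate_bits(levels)
print(allocation)
```

With these made-up numbers the loud band at 80 dB receives the most bits, its quieter neighbours at 50 dB and 20 dB are dropped entirely, and the more distant band at 45 dB survives with a low resolution.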

Data reduction and DRM - a hazard for aural acuity?

Human hearing is an extremely finely coordinated cybernetic system, which is in many respects superior in its efficiency to electronic noise recognition systems - for example, it can successfully follow a single voice among background noise and many simultaneous conversations, something even the most advanced man-made machines still cannot match. Like most biological systems, however, it depends for this on perpetual calibration by external signals (just like our ability to speak, which, as is well known, degenerates horribly fast into hardly understandable mumbling after the onset of deafness). The compensation circuits against the resonance interference of the cochlea therefore most likely also require continual calibration by a variety of naturally structured noises in order to function correctly.

From the view of neuronomy* it must therefore be classified, if not as acutely dangerous, then at least as very precarious that an ever more widespread audio transmission technology systematically removes, for the sake of data reduction, exactly those spectral sound portions at the auditory threshold for which the hearing processor fields of our brain normally decide whether they shall be perceived or filtered out. The signal for their self-calibration is then missing, so that in the longer term a maladjustment of the hearing processor fields can threaten. The cochlea and its compensation circuits form a very complex cybernetic system in which disturbing signals are not merely filtered out afterwards; instead, the compensation circuits in the brain continuously send compensation signals through additional nerve lines back to the cochlea to change the fluid pressure in the sensor cells (so-called "hair cells"**) and in this way control their sensitivity (operating point) separately for the individual frequencies. Maladjustments of the compensation circuits thus have a direct influence on the operation of the cochlea itself and can disturb the perceptibility of sounds at the very beginning of the signal processing chain.

A maladjustment here could possibly even destroy the sensor cells themselves by overload, if the compensation circuits unsuccessfully attempt to drive the operating point of particular sensor cells higher and higher when they do not receive from them the expected signal pattern for a certain sound (e.g. because the expected spectral signal component was removed by data reduction).

Whether such destruction can indeed be caused by data reduction is unknown, but all nerve cells generally react extremely sensitively to overload by continuous high-intensity signals, because the automatic self-repair mechanisms that are crucial for the cell's survival then run out of energy, which typically destroys the cell after only a few minutes. Such a fatal signal could theoretically also be generated by the compensation circuits when, due to data reduction, they fail to detect an expected spectral sound component and therefore attempt to raise the operating point of the affected sensor cells until these finally produce a (continuous) static signal. (I do not claim here that this destruction inevitably happens, but given the way human hearing functions it would be plausible.) To survive overload, overloaded neurons tend to reduce their own sensitivity by removing many of their neurotransmitter receptors as an emergency measure. If this happens to the sensor cells of the cochlea, the result would be an at least temporarily worsened aural acuity.

Possible consequences of intensive consumption of data-reduced audio material could therefore include ear noises (tinnitus), a general degradation of the perception of quiet sounds, as well as a worsened perception of timbre (a so-called "tin ear"), which would make the human of the cyber age even more insensitive than he has already become through the continuous bombardment with mass media infotrash he is exposed to. Some listeners of MP3 music, for example, report that after listening to MP3 for a longer time they start to perceive its typical sound flaws (so-called artifacts) in unreduced music as well, which suggests that MP3 maladjusts the hearing in such a way that data-reduced and unreduced sounds start to sound wrong in the same way while the hearing unlearns to recognize the difference. It is currently still unclear whether the consequences of such maladjustments are only temporary (similar to seeing the world discoloured in green/red after taking off red/green 3D glasses) or whether the continuous consumption of neuroacoustically data-reduced sounds can lead to long-lasting or even permanent damage.

On the other hand, a possible advantage of the way data reduction removes all sound portions classified as "inaudible" could even be that it could be used to clean supposedly contaminated audio material (for instance propaganda from dictatorships) of so-called subliminals (i.e. hidden hypnotic suggestion messages that are intended to get into the brain without reaching conscious awareness) before listening. With its DRM (digital rights management) campaign, however, the sound carrier industry plans to mix so-called "digital watermarks" into all commercially distributed audio recordings. As an artificial and likewise allegedly not consciously audible sound portion, these are to contain digitally readable copyright information that is supposed to survive not only copying onto analogue cassettes but even the neuroacoustic data reduction described above. How such a persistent, artificial signal that repeats over the entire length of a recording affects brain and hearing is very uncertain, and I expect that at least the sound quality will degrade from it (much like with the artificial pressing faults on some "copy protected" audio CDs, which actually violate the "Red Book" standard for CDs and for that reason alone do not belong on the market, since they are defective products declared as audio CDs).

I personally own mainly cheap CDs and phonograph records, but almost no downloaded MP3 music. I do have some computer games with MP3 music, but I don't play them excessively. Although I generally listen to music only quietly, I repeatedly have tinnitus; this happens particularly often when I fall asleep while watching TV, even when the sleep lasts only a few minutes. I therefore also suspect the data reduction in radio and TV broadcasts as a cause, not least because the hearing uses sleep in particular to calibrate itself, so the presence of neuroacoustically data-reduced tones during sleep should be particularly harmful.

Nevertheless, I am in no way trying to demonize MP3 here in the name of the sound carrier industry, because most music CDs are definitely 2 to 4 times overpriced, and everybody who practises private "self law" against the sound carrier industry by downloading has my solidarity. In principle I even find the possibilities of data reduction very good, because it makes the system of music publishing more democratic: through the internet, hobby musicians now finally get a chance to spread their works worldwide. Even I myself would, by my current knowledge, still dare to publish music pieces composed by me on the internet at some point using MP3 or Vorbis/Ogg data reduction (but with a warning not to listen to them excessively). In spite of this I view the negligently increasing spread of neuroacoustic data reduction critically, since nobody has yet analyzed the health consequences, and the nationally planned introduction as the new TV and radio broadcast standards of all things will make it almost impossible to avoid in future. The ever increasing rate of hearing damage among young people may also originate not only from high volume but partly also from the data reduction employed in the music they consume. This is the first time in the history of evolution that human hearing is confronted with a quasi-intelligent technical opponent that systematically cheats the hearing's compensation circuits and abuses them for its own purposes, much as the most disastrous and sickening viruses in nature do with the human immune system.

Possibly the hazards of data reduction could already be reduced by improving the CODECs in such a way that during playback they replace the omitted spectral sound portions with synthetically computed portions that emulate the spectral behaviour of natural noises well enough for the calibration of the hearing processor fields. But there is definitely an acute need for research here. I therefore ask all politicians and neuroacoustics scientists to concern themselves with the potential danger of neuroacoustic data reduction and to postpone the abolition of the analogue radio and TV standards until all risks have been clarified.
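The mechanical part of this proposal, filling omitted bands during playback, can be sketched as follows. This is only an illustration under assumptions: the representation of bands as lists of samples, the function name, the uniform random noise and the fill amplitude of 0.001 are all hypothetical, and whether any such synthetic filling would actually serve the calibration of the hearing is exactly the open research question raised above.

```python
import random


def fill_omitted_bands(bands, fill_amplitude=0.001, seed=0):
    """Return a copy of the decoded bands in which every band that arrived
    empty (all samples zero) is replaced by faint synthetic noise.
    `fill_amplitude` is a made-up value, not a recommendation."""
    rng = random.Random(seed)  # fixed seed only to make the sketch repeatable
    filled = []
    for band_samples in bands:
        if any(s != 0.0 for s in band_samples):
            # Band was transmitted by the encoder: keep it unchanged.
            filled.append(list(band_samples))
        else:
            # Band was omitted by the encoder: substitute quiet noise.
            filled.append([rng.uniform(-fill_amplitude, fill_amplitude)
                           for _ in band_samples])
    return filled


# Hypothetical decoder output: the second band was omitted during encoding.
decoded = [[0.5, -0.25, 0.1], [0.0, 0.0, 0.0]]
playback = fill_omitted_bands(decoded)
print(playback)
```

A real implementation would have to shape the substitute noise per band and keep it below the audible threshold; the sketch leaves both aspects out.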

*============================================================================*